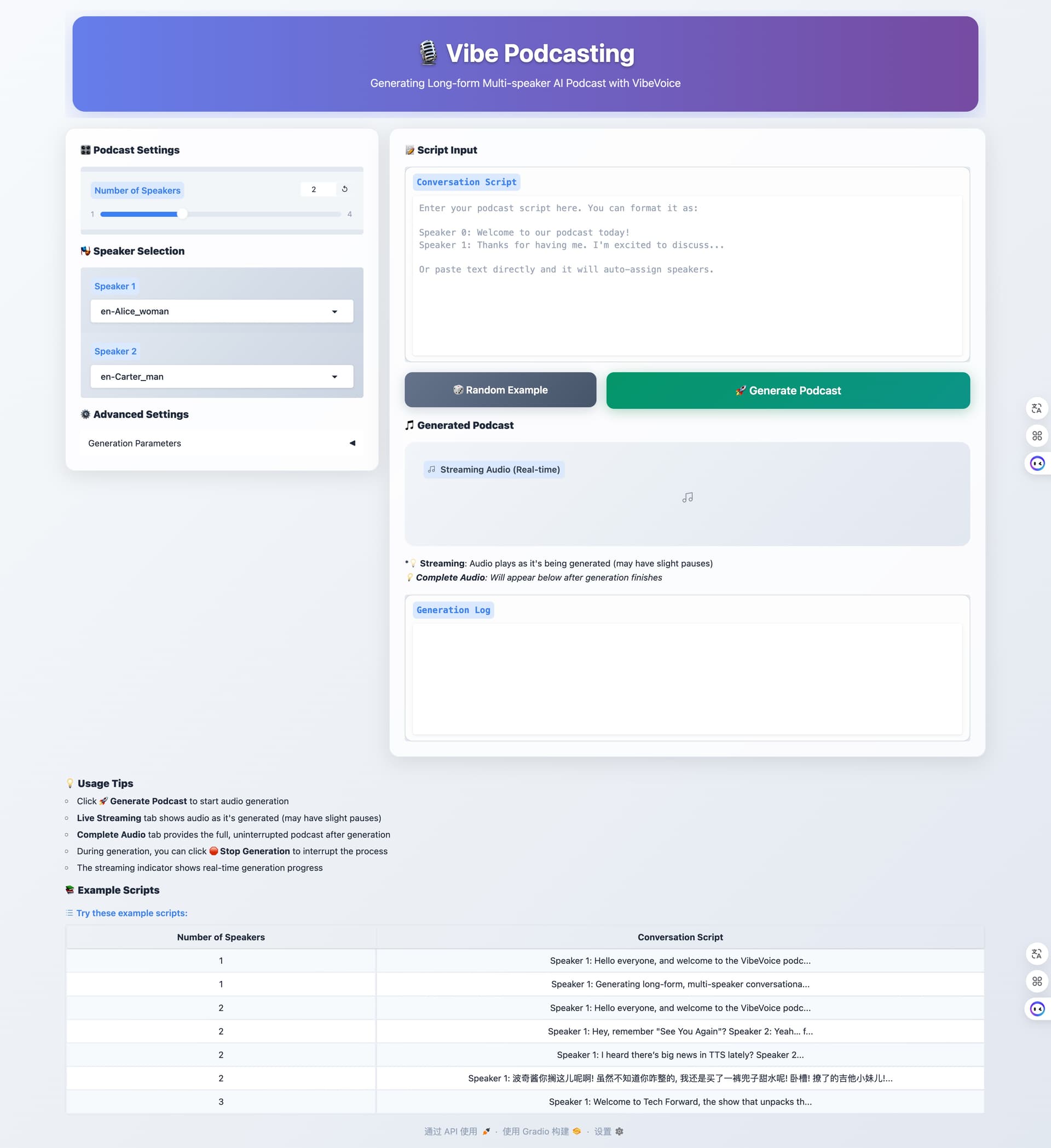

Yesterday Microsoft released VibeVoice, its latest open-source TTS model.

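I got off work early today, so I grabbed a test machine and set up an environment. Sharing it here for anyone on L站 who needs it.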

Launch the Gradio inference front end. Note that inference with the 7B model needs roughly 19 GB of VRAM.

```bash
python demo/gradio_demo.py --model_path WestZhang/ --share
```

```
APEX FusedRMSNorm not available, using native implementation
🎙️ Initializing VibeVoice Demo with Streaming Support...
Loading processor & model from WestZhang/
loading file vocab.json from cache at /root/.cache/huggingface/hub/models--Qwen--Qwen2.5-7B/snapshots/d149729398750b98c0af14eb82c78cfe92750796/vocab.json
loading file merges.txt from cache at /root/.cache/huggingface/hub/models--Qwen--Qwen2.5-7B/snapshots/d149729398750b98c0af14eb82c78cfe92750796/merges.txt
loading file tokenizer.json from cache at /root/.cache/huggingface/hub/models--Qwen--Qwen2.5-7B/snapshots/d149729398750b98c0af14eb82c78cfe92750796/tokenizer.json
loading file added_tokens.json from cache at None
loading file special_tokens_map.json from cache at None
loading file tokenizer_config.json from cache at /root/.cache/huggingface/hub/models--Qwen--Qwen2.5-7B/snapshots/d149729398750b98c0af14eb82c78cfe92750796/tokenizer_config.json
loading file chat_template.jinja from cache at None
The tokenizer class you load from this checkpoint is not the same type as the class this function is called from. It may result in unexpected tokenization.
The tokenizer class you load from this checkpoint is 'Qwen2Tokenizer'.
The class this function is called from is 'VibeVoiceTextTokenizerFast'.
Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.
loading configuration file WestZhang/config.json
Model config VibeVoiceConfig {
  "acoustic_tokenizer_config": {
    "causal": true,
    "channels": 1,
    "conv_bias": true,
    "conv_norm": "none",
    "corpus_normalize": 0.0,
    "decoder_depths": null,
    "decoder_n_filters": 32,
    "decoder_ratios": [
      8,
      5,
      5,
      4,
      2,
      2
    ],
    "disable_last_norm": true,
    "encoder_depths": "3-3-3-3-3-3-8",
    "encoder_n_filters": 32,
    "encoder_ratios": [
      8,
      5,
      5,
      4,
      2,
      2
    ],
    "fix_std": 0.5,
    "layer_scale_init_value": 1e-06,
    "layernorm": "RMSNorm",
    "layernorm_elementwise_affine": true,
    "layernorm_eps": 1e-05,
    "mixer_layer": "depthwise_conv",
    "model_type": "vibevoice_acoustic_tokenizer",
    "pad_mode": "constant",
    "std_dist_type": "gaussian",
    "vae_dim": 64,
    "weight_init_value": 0.01
  },
  "acoustic_vae_dim": 64,
  "architectures": [
    "VibeVoiceForConditionalGeneration"
  ],
  "decoder_config": {
    "attention_dropout": 0.0,
    "hidden_act": "silu",
    "hidden_size": 3584,
    "initializer_range": 0.02,
    "intermediate_size": 18944,
    "max_position_embeddings": 32768,
    "max_window_layers": 28,
    "model_type": "qwen2",
    "num_attention_heads": 28,
    "num_hidden_layers": 28,
    "num_key_value_heads": 4,
    "rms_norm_eps": 1e-06,
    "rope_scaling": null,
    "rope_theta": 1000000.0,
    "sliding_window": null,
    "torch_dtype": "bfloat16",
    "use_cache": true,
    "use_mrope": false,
    "use_sliding_window": false,
    "vocab_size": 152064
  },
  "diffusion_head_config": {
    "ddpm_batch_mul": 4,
    "ddpm_beta_schedule": "cosine",
    "ddpm_num_inference_steps": 20,
    "ddpm_num_steps": 1000,
    "diffusion_type": "ddpm",
    "head_ffn_ratio": 3.0,
    "head_layers": 4,
    "hidden_size": 3584,
    "latent_size": 64,
    "model_type": "vibevoice_diffusion_head",
    "prediction_type": "v_prediction",
    "rms_norm_eps": 1e-05,
    "speech_vae_dim": 64
  },
  "model_type": "vibevoice",
  "semantic_tokenizer_config": {
    "causal": true,
    "channels": 1,
    "conv_bias": true,
    "conv_norm": "none",
    "corpus_normalize": 0.0,
    "disable_last_norm": true,
    "encoder_depths": "3-3-3-3-3-3-8",
    "encoder_n_filters": 32,
    "encoder_ratios": [
      8,
      5,
      5,
      4,
      2,
      2
    ],
    "fix_std": 0,
    "layer_scale_init_value": 1e-06,
    "layernorm": "RMSNorm",
    "layernorm_elementwise_affine": true,
    "layernorm_eps": 1e-05,
    "mixer_layer": "depthwise_conv",
    "model_type": "vibevoice_semantic_tokenizer",
    "pad_mode": "constant",
    "std_dist_type": "none",
    "vae_dim": 128,
    "weight_init_value": 0.01
  },
  "semantic_vae_dim": 128,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.51.3"
}

loading weights file WestZhang/model.safetensors.index.json
Instantiating VibeVoiceForConditionalGenerationInference model under default dtype torch.bfloat16.
Generate config GenerationConfig {}
Instantiating Qwen2Model model under default dtype torch.bfloat16.
Instantiating VibeVoiceAcousticTokenizerModel model under default dtype torch.bfloat16.
Instantiating VibeVoiceSemanticTokenizerModel model under default dtype torch.bfloat16.
Instantiating VibeVoiceDiffusionHead model under default dtype torch.bfloat16.
Loading checkpoint shards: 100%|███████████████████████████████████████████████████████████████████████████████████████| 10/10 [00:02<00:00, 3.73it/s]
All model checkpoint weights were used when initializing VibeVoiceForConditionalGenerationInference.
All the weights of VibeVoiceForConditionalGenerationInference were initialized from the model checkpoint at WestZhang/.
If your task is similar to the task the model of the checkpoint was trained on, you can already use VibeVoiceForConditionalGenerationInference for predictions without further training.
Generation config file not found, using a generation config created from the model config.
Language model attention: flash_attention_2
Found 9 voice files in /workspace/VibeVoice/demo/voices
Available voices: en-Alice_woman, en-Carter_man, en-Frank_man, en-Mary_woman_bgm, en-Maya_woman, in-Samuel_man, zh-Anchen_man_bgm, zh-Bowen_man, zh-Xinran_woman
Loaded example: 1p_Ch2EN.txt with 1 speakers
Loaded example: 1p_abs.txt with 1 speakers
Loaded example: 2p_goat.txt with 2 speakers
Loaded example: 2p_music.txt with 2 speakers
Loaded example: 2p_short.txt with 2 speakers
Loaded example: 2p_yayi.txt with 2 speakers
Loaded example: 3p_gpt5.txt with 3 speakers
Skipping 4p_climate_100min.txt: duration 100 minutes exceeds 15-minute limit
Skipping 4p_climate_45min.txt: duration 45 minutes exceeds 15-minute limit
Successfully loaded 7 example scripts
🚀 Launching demo on port 7860
📁 Model path: WestZhang/
🎭 Available voices: 9
🔴 Streaming mode: ENABLED
🔒 Session isolation: ENABLED
* Running on local URL: http://0.0.0.0:7860
```
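The ~19 GB VRAM figure can be sanity-checked against the `decoder_config` printed in the log. This is a back-of-the-envelope sketch only, assuming the standard Qwen2 layer layout (bias on the q/k/v projections, none on o_proj or the MLP) and 2 bytes per bf16 weight; it ignores the acoustic/semantic tokenizers, the diffusion head, activations and the KV cache. It also derives the acoustic tokenizer's frame rate from `encoder_ratios`, assuming a 24 kHz input sample rate and a hop length equal to the product of the ratios, neither of which the log prints. The function names are hypothetical helpers, not part of VibeVoice.

```python
from math import prod

# Values copied from the "decoder_config" section of the log.
DECODER = {
    "hidden_size": 3584,
    "intermediate_size": 18944,
    "num_hidden_layers": 28,
    "num_attention_heads": 28,
    "num_key_value_heads": 4,
    "vocab_size": 152064,
}
# "encoder_ratios" of the acoustic tokenizer, also from the log.
ENCODER_RATIOS = [8, 5, 5, 4, 2, 2]
# ASSUMPTION: 24 kHz input audio; the sample rate is not printed in the log.
SAMPLE_RATE_HZ = 24_000


def qwen2_param_count(cfg: dict, include_lm_head: bool = True) -> int:
    """Rough parameter count, ASSUMING the standard Qwen2 layout:
    q/k/v projections with bias, o_proj and MLP without bias, two RMSNorms per layer."""
    h = cfg["hidden_size"]
    head_dim = h // cfg["num_attention_heads"]
    kv_dim = cfg["num_key_value_heads"] * head_dim  # grouped-query attention
    attn = (h * h + h) + 2 * (h * kv_dim + kv_dim) + h * h  # q, k, v, o
    mlp = 3 * h * cfg["intermediate_size"]  # gate, up and down projections
    per_layer = attn + mlp + 2 * h  # plus two RMSNorm weight vectors
    embed = cfg["vocab_size"] * h
    total = cfg["num_hidden_layers"] * per_layer + embed + h  # + final norm
    if include_lm_head:
        total += embed  # tie_word_embeddings is false, so lm_head is separate
    return total


def bf16_gib(n_params: int) -> float:
    """Weight memory in GiB at 2 bytes per parameter (bfloat16)."""
    return n_params * 2 / 2**30


def frames_per_second(sample_rate_hz: int, ratios: list[int]) -> float:
    """Latent frame rate if the encoder downsamples by the product of its ratios."""
    return sample_rate_hz / prod(ratios)


if __name__ == "__main__":
    for with_head in (False, True):
        n = qwen2_param_count(DECODER, include_lm_head=with_head)
        print(f"lm_head={with_head}: {n:,} params, ~{bf16_gib(n):.1f} GiB in bf16")
    fps = frames_per_second(SAMPLE_RATE_HZ, ENCODER_RATIOS)
    print(f"hop length {prod(ENCODER_RATIOS)} samples -> {fps} frames/s")
    print(f"15-minute demo limit -> {int(15 * 60 * fps)} acoustic frames")
```

Under these assumptions the Qwen2.5-7B backbone comes to about 7.6 B parameters, or roughly 14.2 GiB of bf16 weights with an untied `lm_head`. That plausibly leaves the remaining few GB of the observed ~19 GB to the tokenizers, diffusion head, activations and CUDA overhead. At 7.5 frames per second, the demo's 15-minute cap works out to 6,750 acoustic frames.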

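The official release ships with three built-in Chinese voices: zh-Anchen_man_bgm, zh-Bowen_man and zh-Xinran_woman.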
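The preset names in the "Available voices" log line appear to follow a `<lang>-<Name>_<gender>[_bgm]` pattern. That pattern is inferred from the nine names shown, not taken from VibeVoice documentation. A small sketch that parses them and filters by language prefix, for example to pick out the Chinese voices; `VoicePreset`, `parse_voice` and `voices_for` are hypothetical names:

```python
from dataclasses import dataclass

# Voice presets as listed under "Available voices" in the log.
VOICES = [
    "en-Alice_woman", "en-Carter_man", "en-Frank_man", "en-Mary_woman_bgm",
    "en-Maya_woman", "in-Samuel_man", "zh-Anchen_man_bgm", "zh-Bowen_man",
    "zh-Xinran_woman",
]


@dataclass(frozen=True)
class VoicePreset:
    lang: str
    name: str
    gender: str
    has_bgm: bool  # ASSUMPTION: "_bgm" suffix marks a reference clip with background music


def parse_voice(stem: str) -> VoicePreset:
    """Split a name like 'zh-Anchen_man_bgm' using the inferred naming pattern."""
    lang, rest = stem.split("-", 1)
    name, gender, *extra = rest.split("_")
    return VoicePreset(lang, name, gender, "bgm" in extra)


def voices_for(lang: str) -> list[VoicePreset]:
    """All presets whose language prefix matches `lang`, in listing order."""
    return [v for v in map(parse_voice, VOICES) if v.lang == lang]


if __name__ == "__main__":
    for v in voices_for("zh"):
        print(v)
```

If `bgm` does mean background music, `[v.name for v in voices_for("zh") if not v.has_bgm]` would leave `Bowen` and `Xinran` as the clean Chinese references.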
